AI-Agents 05

The Architecture of Endurance: Long-Running Context Persistence and Context Optimization in Agentic Systems

Michael Schöffel

March 9, 2026 · 15 min. read


Summary

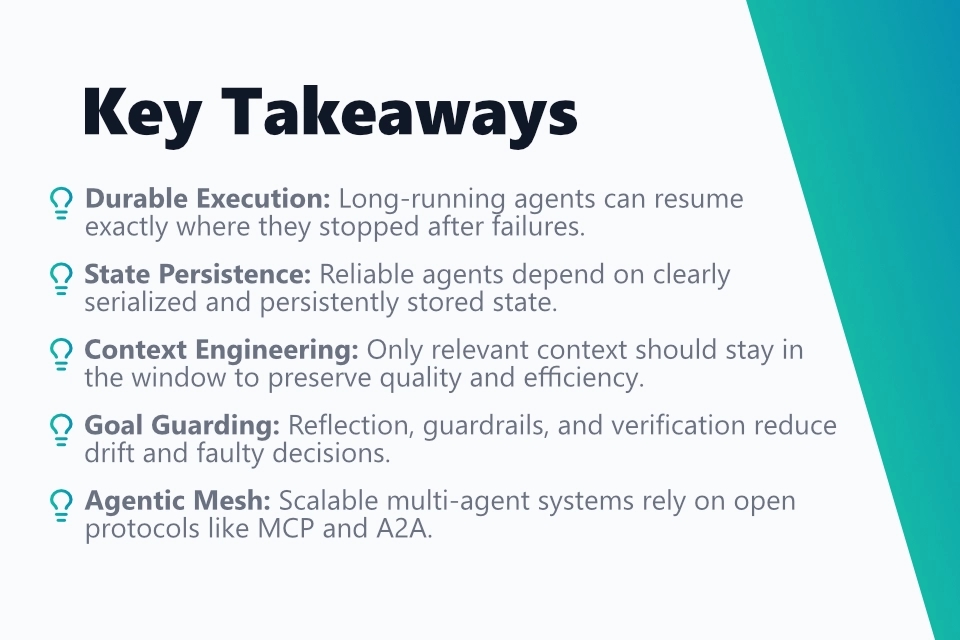

The transition from stateless AI interactions to durable, autonomous agents requires robust architectures for state management. Techniques such as Durable Execution (for example, Temporal.io) and the Virtual Actor Model ensure that agents can operate consistently for weeks and resume exactly where they were interrupted after system failures. Approaches such as InfiAgent complement this with a strict separation between ephemeral reasoning context and persistent, file-based storage, which preserves cognitive stability during complex long-horizon tasks even when context windows are limited.

To optimize token economy and avoid performance degradation, modern systems rely on context engineering and standards such as the Model Context Protocol (MCP). Methods such as cognitive distillation, graph-based pruning, and dynamic token budgeting minimize "mental drift" - the risk that agents lose sight of the goal during long chains of reasoning. The integration of formal verification through mathematical proofs (for example, FormalJudge) also makes it possible to significantly improve the correctness of reasoning chains in sensitive domains such as cybersecurity.

Horizontal scaling is achieved through an agentic mesh and standardized Agent-to-Agent (A2A) protocols, enabling cross-vendor collaboration among specialized agents in swarm-like structures. Blackboard architectures promote loose coupling and resilience, while human-in-the-loop interfaces provide controlled autonomy. Overall, the focus is shifting away from pure model output toward a broader systems engineering discipline that treats persistence, security, and interoperability as foundational pillars of durable AI systems.

Introduction

The evolution of artificial intelligence has reached a critical turning point where the mere generation of text through stateless interactions is giving way to a new era: the era of durable, autonomous AI agents. While earlier iterations of AI systems primarily acted as reactive oracles that answered isolated prompts, modern use cases demand systems that can make consistent progress over days, weeks, or even months. This shift from short-lived chat sessions to "long-running AI agentic systems" presents engineers with fundamental challenges related to memory, state management, and cognitive stability. An agent that takes on complex tasks such as penetration testing or large-scale software development must not lose all of its knowledge whenever the internet connection drops or the infrastructure restarts. The ability to maintain coherent context over extremely long periods is the difference between a toy and a productive tool [1].

State Serialization: From Ephemeral Snapshot to "Durable Agent"

In classical software development, "state" is often a clearly defined variable in memory. In AI agents, however, state consists of a highly complex blend of the current plan, conversation history, outputs from tools, and the model's internal reflections. To make an agent "durable," this state must be serialized in a way that allows it to be frozen at any time and brought back to life later on another machine or at a later point in time.

Structured Serialization with JSON and Pydantic

The first step toward persistence is converting the agent's mental model into a machine-readable format. Frameworks such as Pydantic have become the industry standard for this task. Pydantic makes it possible to define complex data structures through Python classes that enforce strict typing and validation. This is critical for agents, because faulty serialization could cause the agent to wake up after a restart with inconsistent information. For example, if an agent running a pentest with PentestGPT stores a list of discovered vulnerabilities, Pydantic-based serialization ensures that each vulnerability retains the correct attributes such as severity, URL, and exploit status [2]. A simple "snapshotting" approach - saving the state at the end of each interaction - is often not enough. In complex systems, an agent can crash in the middle of a long-running computation or API call. This is where the concept of the "durable agent" becomes central. A durable agent stores not only the result of its work, but the entire execution path [1].

The following example shows how an agent state can be typed, validated, and serialized without losing information:

    from datetime import datetime, timezone
    from pathlib import Path
    from typing import Literal

    from pydantic import BaseModel, Field


    class Finding(BaseModel):
        # Every vulnerability is strictly typed and validatable.
        severity: Literal["low", "medium", "high", "critical"]
        url: str
        exploit_status: Literal["new", "validated", "blocked"] = "new"


    class AgentState(BaseModel):
        # The serialized state contains plan, history, and operational metadata.
        agent_id: str
        goal: str
        current_step: str
        findings: list[Finding] = Field(default_factory=list)
        # Timezone-aware UTC timestamp; datetime.utcnow() is deprecated since Python 3.12.
        last_checkpoint_at: datetime = Field(
            default_factory=lambda: datetime.now(timezone.utc)
        )


    state = AgentState(
        agent_id="pentest-42",
        goal="Check the target system for SQL injection and weak authentication.",
        current_step="Analyze login flow",
        findings=[
            Finding(
                severity="high",
                url="https://target.local/login",
                exploit_status="validated",
            )
        ],
    )

    # JSON is not just an exchange format here, but also a restart contract.
    snapshot_path = Path("state/agent_state.json")
    snapshot_path.parent.mkdir(parents=True, exist_ok=True)
    snapshot_path.write_text(state.model_dump_json(indent=2), encoding="utf-8")

    # On restart, the exact state is loaded again and revalidated.
    restored_state = AgentState.model_validate_json(snapshot_path.read_text(encoding="utf-8"))
    print(restored_state.current_step)

The matching flow diagram highlights the separation between runtime, serialization, and recovery:

Durable Execution Frameworks: Temporal.io and Workflow Persistence

Durable execution frameworks such as Temporal.io represent a paradigm shift in agent orchestration. Instead of treating state as a separate object that must be moved back and forth between the database and the model, Temporal integrates state directly into workflow code. When an agent executes a task, every step - every LLM call, every tool invocation - is recorded in an immutable event history [3], [4]. The decisive advantage of this architecture is fault tolerance. If the server running the agent fails, Temporal can continue the workflow on another server at exactly the point where it was interrupted. It does so through replay: the code runs again, but instead of repeating the API calls, the stored results are loaded from history. For a long-running agent, this means it never "forgets" where it is, even when the underlying infrastructure is unstable [4].
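To make the replay idea more tangible, the following is a minimal sketch in plain Python, not Temporal's actual implementation. The names `EventHistory`, `run_step`, and `review_workflow` are hypothetical, and the crash is simulated with a flag. The only point is that a step whose result is already in the history is served from the log instead of being executed a second time.

```python
from typing import Any, Callable


class EventHistory:
    """Append-only log of completed step results (stand-in for a durable store)."""

    def __init__(self) -> None:
        self.events: list[tuple[str, Any]] = []
        self._cursor = 0

    def start_replay(self) -> None:
        # A restarted worker reads the history again from the beginning.
        self._cursor = 0

    def run_step(self, name: str, fn: Callable[[], Any]) -> Any:
        if self._cursor < len(self.events):
            recorded_name, result = self.events[self._cursor]
            # Replay only works if the code requests the same steps in the same order.
            if recorded_name != name:
                raise RuntimeError(f"Non-deterministic replay: expected {recorded_name}, got {name}")
            self._cursor += 1
            return result
        # No recorded result yet: execute the step and record its outcome.
        result = fn()
        self.events.append((name, result))
        self._cursor += 1
        return result


calls = {"scan": 0, "triage": 0}


def scan() -> list[int]:
    calls["scan"] += 1
    return [22, 443]


def triage(ports: list[int]) -> str:
    calls["triage"] += 1
    return f"{len(ports)} ports need review"


def review_workflow(history: EventHistory, crash_before_triage: bool = False) -> str:
    ports = history.run_step("scan", scan)
    if crash_before_triage:
        raise RuntimeError("worker crashed")  # simulated infrastructure failure
    return history.run_step("triage", lambda: triage(ports))


history = EventHistory()
try:
    review_workflow(history, crash_before_triage=True)
except RuntimeError:
    pass  # the scan result is already recorded in the history

history.start_replay()
result = review_workflow(history)
```

After the simulated crash, the second run takes the scan result from the history and only executes the triage step, so each side effect happens once.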

The core element of durable execution is not a stored Python stack, but deterministic workflow replay based on event history:

A simplified Temporal workflow makes the practical difference between normal code and durable code visible:

    from datetime import timedelta

    from temporalio import workflow


    @workflow.defn
    class SecurityReviewWorkflow:
        @workflow.run
        async def run(self, target_url: str) -> dict:
            # Every activity is a resumable step in the workflow.
            scan_result = await workflow.execute_activity(
                "run_port_scan",
                target_url,
                start_to_close_timeout=timedelta(minutes=5),
            )

            # The LLM call also sits behind an activity boundary.
            triage = await workflow.execute_activity(
                "triage_findings_with_llm",
                scan_result,
                start_to_close_timeout=timedelta(minutes=2),
            )

            # If the worker fails here, Temporal replays the workflow.
            # Completed activities are loaded from event history.
            report = await workflow.execute_activity(
                "build_report",
                triage,
                start_to_close_timeout=timedelta(minutes=2),
            )
            return report

| Persistence Model | Mechanism | Advantage | Drawback |
|---|---|---|---|
| Stateless Scripting | No persistence | Simple to implement | Total loss on crash |
| Snapshotting (Redis/SQL) | Manual save at the end of each turn | Low latency | Data loss between snapshots |
| Durable Execution (Temporal) | Event sourcing / log replay | Maximum reliability | More complex infrastructure |
| Virtual Actor Model (Dapr) | Location-transparent objects | Excellent scalability | Requires a specialized programming model |

The Virtual Actor Model and the Abstraction of Identity

Another groundbreaking concept for scaling durable agents is the Virtual Actor Model, as used in frameworks such as Microsoft Orleans or Dapr. In this model, each agent is treated as an "actor" - a standalone unit with its own state and behavior. A "virtual" actor always exists conceptually. If it is inactive, its state is put to sleep in a database (for example, PostgreSQL or Redis). As soon as a new message arrives for that agent, the framework automatically activates it, loads its state, and the actor resumes work [5], [6]. This architecture makes it possible to manage millions of agents simultaneously without having them consume resources permanently. In practice, that means a system such as CAI (Cybersecurity AI) can instantiate a dedicated actor for every target being tested, with each actor exploring its environment over days without the developer having to manage sessions directly [5], [6], [7].

The actor's identity remains stable even if its process instance comes and goes:

In Python-like pseudocode, the activation model can be read like this:

    class TargetActor:
        def __init__(self, actor_id: str, state_store):
            self.actor_id = actor_id
            self.state_store = state_store
            self.state = None

        async def activate(self) -> None:
            # The last state is loaded during activation.
            self.state = await self.state_store.load(self.actor_id) or {
                "visited_hosts": [],
                "current_phase": "recon",
            }

        async def handle_message(self, message: dict) -> dict:
            # The actor only handles messages for its own identity.
            if message["type"] == "host_discovered":
                self.state["visited_hosts"].append(message["host"])

            result = {
                "actor_id": self.actor_id,
                "phase": self.state["current_phase"],
                "known_hosts": len(self.state["visited_hosts"]),
            }

            # The new state is persisted before deactivation.
            await self.state_store.save(self.actor_id, self.state)
            return result

InfiAgent and State Externalization into the File System

A more radical approach to solving the persistence problem appears in the InfiAgent framework from the University of Hong Kong. The researchers observed that accumulating information in the prompt (the context window) inevitably leads to "context rot" - a degradation of model performance as token count increases. InfiAgent therefore proposes a strict separation between persistent task state and ephemeral reasoning context [8]. Instead of carrying the entire history inside the prompt, InfiAgent uses a file-centric state abstraction. The agent treats a dedicated directory in the file system as its long-term memory. At each decision step, the agent reconstructs its working context from a snapshot of that directory and a small fixed window of recent actions. This ensures that the LLM's context window never overflows, no matter how long the task runs. Experiments show that this approach remains significantly more stable than traditional context-centric architectures on complex deep-research tasks spanning hundreds of steps [8].

Here is the central idea: long-term memory does not live in the prompt, but in the agent's working directory:

A minimal Python example shows the separation between persistent files and the ephemeral prompt window:

    from pathlib import Path
    import json


    WORKDIR = Path("agent_workspace")
    WORKDIR.mkdir(exist_ok=True)


    def reconstruct_context(last_actions: list[str]) -> str:
        # Only durable artifacts from the file system are read as the factual basis.
        notes_path = WORKDIR / "notes.md"
        findings_path = WORKDIR / "findings.json"
        notes = notes_path.read_text(encoding="utf-8") if notes_path.exists() else ""
        findings = json.loads(findings_path.read_text(encoding="utf-8")) if findings_path.exists() else []

        # The prompt window remains small and reproducible.
        return "\n".join(
            [
                "SYSTEM: Work only with current project facts.",
                f"NOTES:\n{notes}",
                f"FINDINGS:\n{json.dumps(findings, ensure_ascii=False, indent=2)}",
                "RECENT ACTIONS:",
                *last_actions[-5:],
            ]
        )


    def persist_new_fact(title: str, content: str) -> None:
        # New insights are written permanently outside the LLM context.
        with (WORKDIR / "notes.md").open("a", encoding="utf-8") as handle:
            handle.write(f"\n## {title}\n{content}\n")

Context Routing & Selective Attention: The Art of Token Economy

Even if we can store an agent's state perfectly, the problem of context limitation remains. An LLM can process only a limited number of tokens at the same time. In that sense, context is the agent's "working memory" (RAM), while the persistence layer acts as its "hard drive." Effective context engineering therefore means selecting exactly the tokens that are most relevant to the current decision at any given moment.
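One simple way to read "selecting exactly the most relevant tokens" is as a budgeted selection problem. The sketch below is an illustration under stated assumptions, not a method from the cited sources: `ContextItem`, its `relevance` score, and its precomputed `tokens` count are hypothetical, and the greedy strategy is only one of several possible choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    text: str
    relevance: float  # 0.0 to 1.0, assumed to come from a retriever or heuristic
    tokens: int       # assumed to be precomputed with the model's tokenizer


def select_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Greedily pick the most relevant items that still fit into the token budget."""
    selected: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda entry: entry.relevance, reverse=True):
        if used + item.tokens <= budget:
            selected.append(item)
            used += item.tokens
    return selected


candidates = [
    ContextItem("Validated SQL injection on /search", relevance=0.95, tokens=40),
    ContextItem("Raw port scan output", relevance=0.30, tokens=900),
    ContextItem("Login flow uses session cookies", relevance=0.80, tokens=60),
    ContextItem("Allowed scope: 10.0.0.0/24", relevance=0.70, tokens=20),
]

working_set = select_context(candidates, budget=150)
```

With a budget of 150 tokens, the three compact, highly relevant facts enter the working set, while the bulky raw scan output stays on the "hard drive" until it is explicitly needed.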

The Model Context Protocol (MCP) as a Universal Standard

To standardize access to external context, the Model Context Protocol (MCP) has become a key building block. MCP decouples the AI application from the data sources. Through MCP servers, an agent can access databases, file systems, or APIs without having to keep that information in the prompt at all times. This enables just-in-time context loading: the agent requests specific information only when it needs it for a reasoning step [9], [10].

A diagram clearly shows the difference between a statically overloaded prompt and targeted context retrieval:

A Python example with an MCP-like client illustrates the flow:

    async def load_relevant_context(mcp_client, target_id: str, phase: str) -> dict:
        # The agent does not ask for everything, only for context needed right now.
        response = await mcp_client.call_tool(
            "security_context.lookup",
            {
                "target_id": target_id,
                "phase": phase,
                "fields": ["open_findings", "recent_decisions", "allowed_actions"],
            },
        )
        return response["structuredContent"]


    async def plan_next_step(llm, mcp_client, target_id: str) -> str:
        context = await load_relevant_context(mcp_client, target_id, phase="privilege_escalation")

        # Only the selectively loaded context enters the prompt.
        prompt = f"""
        Goal: Plan the next safe step.
        Open findings: {context['open_findings']}
        Recent decisions: {context['recent_decisions']}
        Allowed actions: {context['allowed_actions']}
        """
        return await llm.ainvoke(prompt)

Context Pruning and Compaction: Cognitive Distillation

When an agent runs for days, its history inevitably becomes too large for the context window. Compaction is the solution. The existing history is summarized by an LLM. A key technical aspect here is distinguishing between unimportant details (for example, raw tool output from a port scan) and critical insights (for example, the identification of a specific vulnerability). Effective compaction algorithms preserve high-fidelity details such as architecture decisions or unresolved bugs while discarding redundant messages [9]. A more advanced approach models the search process as a state graph or attack graph and removes redundant paths or cycles from the trajectory. This keeps the active working set smaller and the search more focused [11].
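The graph-based variant can be illustrated with a very small sketch. It assumes the trajectory is available as a list of state labels and treats any return to an already visited state as a redundant loop. The function name `prune_cycles` and the state labels are hypothetical, and this is not the algorithm of any cited system.

```python
def prune_cycles(trajectory: list[str]) -> list[str]:
    """Drop every loop that returns to an already visited state."""
    pruned: list[str] = []
    position: dict[str, int] = {}
    for state in trajectory:
        if state in position:
            # Everything after the first visit was a detour that led back here.
            cut = position[state] + 1
            for removed in pruned[cut:]:
                del position[removed]
            pruned = pruned[:cut]
        else:
            position[state] = len(pruned)
            pruned.append(state)
    return pruned


trajectory = [
    "recon",
    "login_page",
    "sqli_probe",
    "login_page",
    "session_check",
    "admin_panel",
]
compact_path = prune_cycles(trajectory)
```

Here the `sqli_probe` detour is removed because the agent returned to `login_page` afterwards. A production system would first store any validated finding from that detour as a structured fact, so that pruning the path does not discard the insight.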

The practical question, then, is not whether compaction happens, but what must be preserved intentionally:

    HIGH_FIDELITY_TAGS = {"architecture_decision", "validated_finding", "open_risk", "blocked_path"}


    def compact_history(events: list[dict]) -> tuple[list[dict], str]:
        # Critical facts are kept as structured facts.
        preserved = [event for event in events if event.get("tag") in HIGH_FIDELITY_TAGS]

        # Noisy or repetitive events are condensed into a short summary.
        noisy_events = [event for event in events if event.get("tag") not in HIGH_FIDELITY_TAGS]
        summary = "\n".join(
            f"- {event['timestamp']}: {event['message']}" for event in noisy_events[-20:]
        )
        return preserved, summary


    history = [
        {"tag": "tool_output", "timestamp": "10:00", "message": "Port scan found 6 open ports"},
        {"tag": "validated_finding", "timestamp": "10:03", "message": "SQL injection on /search confirmed"},
        {"tag": "blocked_path", "timestamp": "10:08", "message": "RCE attempt blocked by WAF"},
    ]

    preserved_facts, compact_summary = compact_history(history)

Seen as a graph, compaction is fundamentally a reduction to informative nodes and edges:

Dynamic Token Budgeting and the Avoidance of "Overthinking"

An often overlooked problem in durable systems is the cost and latency caused by unnecessarily long reasoning chains. Modern models tend to overthink - they generate thousands of tokens for tasks that would require only a simple answer. Systems such as Certaindex address this through dynamic token budgeting. Certaindex measures reasoning progress and allows an early exit once a stable answer has been found. In multi-agent systems, this enables intelligent resource allocation: an agent working on a highly complex task is given a larger token budget, while routine tasks are completed with minimal effort [12].
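How "a stable answer" might be detected can be sketched as follows. This is a simplified illustration and not Certaindex's actual metric: it assumes the system can probe the model for an intermediate answer at regular intervals, and the names `answer_stability` and `can_stop`, the window size, and the threshold are all hypothetical.

```python
from collections import Counter


def answer_stability(probe_answers: list[str], window: int = 4) -> float:
    """Share of the most common answer among the last `window` probes."""
    recent = probe_answers[-window:]
    if not recent:
        return 0.0
    most_common_count = Counter(recent).most_common(1)[0][1]
    return most_common_count / len(recent)


def can_stop(probe_answers: list[str], window: int = 4, threshold: float = 1.0) -> bool:
    # Stop only once enough probes exist and they agree with each other.
    return len(probe_answers) >= window and answer_stability(probe_answers, window) >= threshold


probes = ["17", "19", "21", "21", "21", "21"]
stop_after_four = can_stop(probes[:4])
stop_after_six = can_stop(probes)
```

After four probes the answers still fluctuate, so reasoning continues. After six probes the last four agree, and the remaining budget can be released for other tasks.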

A simple control model for dynamic budgets can look like this:

    def allocate_token_budget(task_complexity: float, uncertainty: float, remaining_quota: int) -> int:
        # Complex and uncertain tasks receive more budget, but never unlimited budget.
        proposed = int(800 + task_complexity * 2200 + uncertainty * 1800)
        # Apply the 300-token floor first, then cap at the remaining quota,
        # so the result can never exceed what is actually left.
        return min(remaining_quota, max(300, proposed))


    def should_exit_early(confidence: float, budget_spent: int, max_budget: int) -> bool:
        # Exit early when answer stability is high, or stop when the budget is used up.
        return confidence >= 0.92 or budget_spent >= max_budget


    budget = allocate_token_budget(task_complexity=0.7, uncertainty=0.4, remaining_quota=2400)
    print({"assigned_budget": budget, "early_exit": should_exit_early(0.94, 1100, budget)})

| Strategy | How It Works | Goal |
|---|---|---|
| Sliding Window | Keeps only the last N tokens | Cost minimization |
| Summarization | LLM summarizes old messages | Preservation of long-term intent |
| Selective RAG | Vector search loads only relevant chunks | Access to massive document sets |
| Speculative Loading | Loads context based on predictions | Reduced latency |
| Context Forcing | Trains the model for long-term consistency | Avoidance of contradictions |

In addition to token management, agentic FinOps is becoming increasingly important: durable workflows need hard quotas at the workflow level. An agent operating autonomously for 48 hours must be moved automatically into a deep freeze state when it reaches its cost limit, rather than consuming resources unchecked.
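A hard quota of this kind can be sketched as a small guard object that every model call has to pass through. The class `CostGuard`, the `FROZEN` status, and the price per 1,000 tokens are assumptions made for illustration. In a real deployment the frozen state would be persisted by the durable execution layer rather than held in memory.

```python
from dataclasses import dataclass
from enum import Enum


class WorkflowStatus(Enum):
    RUNNING = "running"
    FROZEN = "frozen"


@dataclass
class CostGuard:
    limit_usd: float
    spent_usd: float = 0.0
    status: WorkflowStatus = WorkflowStatus.RUNNING

    def charge(self, tokens: int, usd_per_1k_tokens: float) -> None:
        # A frozen workflow must not spend anything until a human raises the limit.
        if self.status is WorkflowStatus.FROZEN:
            raise RuntimeError("Workflow is frozen; raise the limit before resuming.")
        self.spent_usd += tokens / 1000 * usd_per_1k_tokens
        if self.spent_usd >= self.limit_usd:
            self.status = WorkflowStatus.FROZEN


guard = CostGuard(limit_usd=1.00)
guard.charge(tokens=40_000, usd_per_1k_tokens=0.01)  # roughly 0.40 USD, still running
status_after_first_call = guard.status
guard.charge(tokens=70_000, usd_per_1k_tokens=0.01)  # total exceeds the limit
```

The second call pushes spending over the limit, so the guard switches to `FROZEN` and rejects every further charge until the quota is adjusted.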

The "Mental Drift" Problem and Goal Guarding

A durable agent operating autonomously for days is at risk of suffering from a phenomenon known as mental drift. With every step in a long chain of interactions, small inaccuracies or misunderstandings can accumulate until the agent completely loses sight of the original objective. Since LLMs are primarily trained to predict the next token based on statistical patterns rather than to discover truth, this drift is systemic [9].

System Prompt Anchoring and Self-Correction

The simplest countermeasure is anchoring. Here, the original task and the most important rules are placed prominently in the system prompt during every turn. For truly long runtimes, however, this is not enough. The agent needs mechanisms for self-correction. An agent such as LuaN1ao uses a dedicated reflector role for this purpose. The reflector audits the work of the planner and executor and looks for logical errors or signs that the agent has reached a dead end [1], [13].
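The anchoring step itself can be sketched in a few lines. The function `build_turn_messages`, the message format, and the window size are assumptions for illustration. The idea is only that older turns may drop out of the prompt, while the goal anchor is rebuilt in first position on every turn.

```python
def build_turn_messages(goal: str, rules: list[str], history: list[dict], window: int = 6) -> list[dict]:
    """Rebuild the prompt for one turn with the goal anchor always in first position."""
    anchor = "\n".join([f"ORIGINAL GOAL: {goal}", "RULES:", *(f"- {rule}" for rule in rules)])
    # Older turns may fall out of the window, but the anchor never does.
    recent = history[-window:] if window > 0 else []
    return [{"role": "system", "content": anchor}, *recent]


history = [{"role": "assistant", "content": f"step {i}"} for i in range(1, 21)]
messages = build_turn_messages(
    goal="Assess login security of app.internal.local",
    rules=["Never impair availability", "Stay inside the approved scope"],
    history=history,
)
```

Even after twenty steps, the model sees the original goal and rules first, followed only by the six most recent turns.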

The role distribution between planner, executor, and reflector can be represented well as a control loop:

A small LangGraph-inspired example shows what this loop looks like in code:

    from typing import TypedDict


    class AgentLoopState(TypedDict):
        goal: str
        plan: str
        execution_result: str
        reflection: str


    def planner(state: AgentLoopState) -> AgentLoopState:
        # The goal anchor is reconsidered during every planning step.
        state["plan"] = f"Goal: {state['goal']} | Next step: inspect the app's session handling"
        return state


    def executor(state: AgentLoopState) -> AgentLoopState:
        # In practice, this would be a tool or LLM call.
        state["execution_result"] = "Session cookie is not HttpOnly"
        return state


    def reflector(state: AgentLoopState) -> AgentLoopState:
        # The reflector checks whether result and goal are still aligned.
        if "HttpOnly" in state["execution_result"]:
            state["reflection"] = "No drift: result is relevant to authentication security."
        else:
            state["reflection"] = "Drift detected: return to planning."
        return state

Policy-as-Code and Guardrails

To keep the agent's autonomy within safe boundaries, policy-as-code approaches are used. Systems such as CAI validate agent actions against a predefined set of rules and security boundaries. For example, if a pentesting agent has been instructed never to impair system availability, such a policy layer can prevent it from executing a dangerous denial-of-service exploit even if its internal logic would suggest doing so [7].

Technically, a guardrail layer is nothing more than a mandatory policy check before every action:

    POLICIES = {
        "allow_destructive_actions": False,
        "allowed_network_scope": ["10.0.0.0/24", "app.internal.local"],
    }


    def validate_action(action: dict) -> None:
        # Policy-as-code makes safety rules explicit and testable.
        if action["type"] == "dos_test" and not POLICIES["allow_destructive_actions"]:
            raise PermissionError("DoS tests are forbidden by policy.")

        # Simplification: this is an exact string match. A CIDR entry such as
        # "10.0.0.0/24" does not match individual IP addresses in that range here.
        if action["target"] not in POLICIES["allowed_network_scope"]:
            raise PermissionError("Target lies outside the approved scope.")


    candidate_action = {"type": "dos_test", "target": "app.internal.local"}
    try:
        validate_action(candidate_action)
    except PermissionError as error:
        # The policy layer blocks the action before any tool is invoked.
        print(f"Blocked: {error}")

Formal Verification: The Mathematical Guarantee of Correctness

Arguably the most significant advance in agent reliability during 2025-2026 is the integration of formal verification. Unlike conventional evaluations, which rely on statistical likelihood, formal verification uses mathematical proofs to ensure the correctness of reasoning chains. Systems such as FormalJudge break an agent's reasoning into atomic facts and verify them with SMT solvers (Satisfiability Modulo Theories). An agent that writes code or performs complex security analyses must express its conclusions in a structured language such as Dafny or Lean. The verifier can then mathematically guarantee that the agent's logic contains no contradictions. Studies show that agents guided by formal feedback can increase their accuracy on complex tasks from 70.7% to 99.8% [14].

Formal oversight sits between reasoning and approval:

A heavily simplified Python pattern shows the data shape a verifier needs:

    def extract_atomic_claims(agent_rationale: str) -> list[dict]:
        # In real systems, this would be a structured parser rather than string splitting.
        return [
            {"claim": "input_is_sanitized", "value": False},
            {"claim": "sql_query_uses_user_input", "value": True},
        ]


    def verify_claims_with_solver(claims: list[dict]) -> bool:
        # A real solver would check logical consistency.
        facts = {item["claim"]: item["value"] for item in claims}
        return not (facts["input_is_sanitized"] and facts["sql_query_uses_user_input"])


    claims = extract_atomic_claims("Unsanitized input reaches SQL query")
    is_consistent = verify_claims_with_solver(claims)
    print({"verified": is_consistent, "claims": claims})

Agentic Observability: Understanding the "Ghost in the Machine"

The greatest challenge of durable autonomy is debugging. If an agent goes through thousands of reasoning steps over 72 hours, a traditional stack trace is worthless. Modern architectures therefore rely on reasoning trace analysis and specialized agent observability stacks. They allow us to "rewind" the agent's state at any point in time. We can see not only what the agent did, but also which state snapshot it had in mind at that moment. This kind of "forensics for agents" is the prerequisite for deploying durable systems productively at all in regulated industries [5], [7].
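The "rewind" operation can be sketched as a lookup over recorded trace entries. The sketch assumes each entry carries a `step_id` and a `state_snapshot`. The helper names `rewind` and `diff_snapshots` are hypothetical and do not refer to a specific observability product.

```python
from copy import deepcopy


def rewind(trace: list[dict], step_id: str) -> dict:
    """Return a copy of the state snapshot the agent held at `step_id`."""
    for entry in trace:
        if entry["step_id"] == step_id:
            # Copy the snapshot so that forensic analysis cannot alter the trace.
            return deepcopy(entry["state_snapshot"])
    raise KeyError(f"No trace entry for {step_id}")


def diff_snapshots(before: dict, after: dict) -> dict:
    """List every key whose value changed, as (before, after) pairs."""
    keys = before.keys() | after.keys()
    return {
        key: (before.get(key), after.get(key))
        for key in sorted(keys)
        if before.get(key) != after.get(key)
    }


recorded_trace = [
    {"step_id": "step-102", "state_snapshot": {"phase": "recon", "open_findings": 1}},
    {"step_id": "step-103", "state_snapshot": {"phase": "enumeration", "open_findings": 2}},
]

changes = diff_snapshots(rewind(recorded_trace, "step-102"), rewind(recorded_trace, "step-103"))
```

Comparing two rewound snapshots shows what changed in the agent's view between two decisions, which is often more informative than the raw log lines.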

In practice, observability means that every decision must be traceable with prompt version, snapshot, and tool context:

    def log_agent_step(
        trace_store,
        *,
        step_id: str,
        state_snapshot: dict,
        prompt_hash: str,
        tool_call: dict,
        outcome: str,
    ) -> None:
        # Each entry links the decision to the prompt version, state, and tool context.
        trace_store.append(
            {
                "step_id": step_id,
                "prompt_hash": prompt_hash,
                "state_snapshot": state_snapshot,
                "tool_call": tool_call,
                "outcome": outcome,
            }
        )


    trace = []
    log_agent_step(
        trace,
        step_id="step-103",
        state_snapshot={"phase": "enumeration", "open_findings": 2},
        prompt_hash="sha256:abc123",
        tool_call={"name": "http_probe", "args": {"path": "/admin"}},
        outcome="403 Forbidden",
    )

Dual-Audit Mechanisms and Hierarchical Supervision

In highly sensitive environments, a dual-audit model is often implemented. Here, two independent agent instances monitor the process. This is comparable to the four-eyes principle in human work. In the PentestGPT v2 architecture, this is complemented by a Task Difficulty Assessment (TDA). TDA estimates the probability of success of a path based on four dimensions [11]:

- Horizon Estimation: How many steps are still required?

- Evidence Confidence: How reliable is the evidence collected so far?

- Context Load: Is the context window already too overloaded for precise reasoning?

- Historical Success: How successful have similar actions been in the past?

When TDA signals that a path is becoming too uncertain, the system enforces backtracking or realignment of the agent [11].

A scoring model for TDA can be expressed as an explicit decision function:

    def estimate_path_risk(
        horizon: float,
        evidence_confidence: float,
        context_load: float,
        historical_success: float,
    ) -> float:
        # High horizon and high context load increase risk.
        return (
            0.35 * horizon
            + 0.30 * context_load
            + 0.20 * (1.0 - evidence_confidence)
            + 0.15 * (1.0 - historical_success)
        )


    risk = estimate_path_risk(
        horizon=0.8,
        evidence_confidence=0.55,
        context_load=0.9,
        historical_success=0.4,
    )

    if risk > 0.65:
        print("Backtracking or human approval required")

Scaling Autonomy: Agentic Mesh & Swarms

When a problem becomes so large that a single agent can no longer handle it despite all persistence mechanisms, the system must scale horizontally. This takes us away from monolithic agents and toward distributed systems referred to as an agentic mesh or swarms.

Blackboard Architectures and Loose Coupling

The blackboard architecture is a classic AI design pattern that is experiencing a renaissance in the LLM era. Several specialized agents collaborate by writing information to and reading from a shared blackboard. There is no rigid predefined workflow; instead, agents respond opportunistically to new information. A reconnaissance agent writes a list of open ports to the blackboard, after which an exploit specialist agent picks up that information and searches for vulnerabilities. This loose coupling makes the system extremely resilient to the failure of individual components [15].

A blackboard is especially easy to visualize because collaboration does not happen through direct point-to-point coupling:

The corresponding data model is often intentionally simple so that many agents can work with it at the same time:

    blackboard = {
        "open_ports": [],
        "validated_findings": [],
        "pending_tasks": [],
    }


    def publish_fact(topic: str, value) -> None:
        # Each agent publishes only facts or tasks to the shared board.
        blackboard[topic].append(value)


    def consume_tasks() -> list[dict]:
        # A specialized agent pulls only the tasks relevant to it.
        return [task for task in blackboard["pending_tasks"] if task["owner"] == "exploit-agent"]


    publish_fact("open_ports", {"host": "10.0.0.15", "port": 443})
    publish_fact("pending_tasks", {"owner": "exploit-agent", "task": "Check TLS configuration"})

The Agentic Mesh: The Internet for Agents

An agentic mesh is a distributed infrastructure that connects agents through standardized protocols. It acts as a "cognitive fabric" that provides identity, governance, and communication at the infrastructure level. Much like a service mesh in microservices, the agentic mesh manages the diplomacy between agents [16]. A modern agentic mesh consists of several layers:

- Agent Runtime: The isolated execution environment (for example, Docker or serverless containers).

- Identity Layer: Unique DIDs (Decentralized Identifiers) for each agent to ensure that only authorized agents exchange sensitive data.

- Communication Fabric: The message bus that supports protocols such as A2A or MCP.

- Model Abstraction: A layer that standardizes access to different LLMs (OpenAI, Anthropic, local models).

- Safety & Observability: Monitoring of decision paths and real-time enforcement of security policies.

A layered diagram is useful here because otherwise the mesh concept becomes too abstract very quickly:

A2A Protocols: The Language of Collaboration

For agents from different vendors to work together, a standardized protocol is required. The Agent-to-Agent (A2A) protocol has emerged as the leading approach. In contrast to MCP, which governs the vertical connection between model and tool, A2A enables horizontal communication between agents [10], [17]. The A2A interaction process is highly structured and resembles a business process:

- Discovery: An agent finds a partner through its Agent Card, a JSON file that describes capabilities and endpoints.

- Negotiation: The agents negotiate conditions (inquiry, proposal, agreement).

- Task Delegation: The actual task is handed off.

- State Tracking: Progress is streamed asynchronously through SSE (Server-Sent Events) or webhooks.

The flow maps directly to a sequence diagram:

A minimal Python schema shows what delegation can look like from the caller agent's point of view:

    import httpx


    async def delegate_task(agent_card_url: str, task_payload: dict) -> dict:
        async with httpx.AsyncClient(timeout=30.0) as client:
            # Discovery: read the target agent's capabilities and policy first.
            card_response = await client.get(agent_card_url)
            card_response.raise_for_status()
            agent_card = card_response.json()

            # Simplified card layout: "skills" is treated as a list of skill names and
            # "task_endpoint" as the delegation URL. Real Agent Cards may be structured differently.
            if "financial-analysis" not in agent_card["skills"]:
                raise ValueError("Target agent cannot process this task")

            # Delegation: then send the actual task to the A2A endpoint.
            response = await client.post(agent_card["task_endpoint"], json=task_payload)
            response.raise_for_status()
            return response.json()

This standardization allows, for example, a research agent to commission a specialized finance agent to perform a balance sheet analysis without both agents having to come from the same developer. The integrity of the interaction is supported by signed Agent Cards and other secured identity mechanisms [10], [17].

Human-in-the-Loop 2.0: Orchestrated Interruptions

True autonomy does not mean the absence of humans, but the system's ability to know when it needs help. In a durable architecture, this is solved through dynamic interrupts. Instead of aborting the process abruptly, the agent uses specialized human-in-the-loop interfaces. The workflow state is parked in the durable execution layer (for example, Temporal) and resumed after approval. This prevents autonomy bias, where agents try to solve problems for which they have neither authorization nor contextual knowledge [4].

The pattern is conceptually simple: pause, wait for a signal, then continue the exact same workflow. A Temporal-style workflow shows this orchestration clearly:

```python
from temporalio import workflow


@workflow.defn
class ApprovalWorkflow:
    def __init__(self) -> None:
        self.approved = False

    @workflow.signal
    def approve(self) -> None:
        # The signal is sent externally, for example by a human review UI.
        self.approved = True

    @workflow.run
    async def run(self, action: dict) -> str:
        if action.get("risk") == "high":
            # The workflow is parked durably until the approval signal arrives.
            await workflow.wait_condition(lambda: self.approved)
            return "Action approved and executed"

        return "Action executed without approval"
```

The Challenge of State Migration

One often overlooked aspect is code evolution. When we improve the cognitive architecture of a durable agent, existing instances must be migrated. An agent that was started with version 1.0 of a prompt can react inconsistently if it is suddenly confronted with the tools of version 2.0. Managing agent state versioning therefore becomes a core competency in agentic platform engineering.
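One way to catch such mismatches early is to pin the prompt and tool versions in the persisted state and check them before an instance resumes. The sketch below is illustrative only: `AgentVersions`, `COMPATIBLE_TOOLS`, and `resume_decision` are hypothetical names, and the compatibility table is invented rather than taken from any real platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentVersions:
    """Versions an agent instance was started with (persisted with its state)."""
    prompt: int
    tools: int


# Hypothetical table: tool versions each prompt version has been validated against.
COMPATIBLE_TOOLS = {
    1: {1},
    2: {1, 2},
}


def resume_decision(pinned: AgentVersions, deployed_tools: int) -> str:
    """Decide whether a parked instance may resume on the deployed tool set."""
    if deployed_tools in COMPATIBLE_TOOLS.get(pinned.prompt, set()):
        return "resume"
    # Unknown or unvalidated combination: migrate the state before resuming.
    return "migrate"


print(resume_decision(AgentVersions(prompt=1, tools=1), deployed_tools=1))
print(resume_decision(AgentVersions(prompt=1, tools=1), deployed_tools=2))
```

The point of the check is that the decision is explicit: an instance either resumes on a validated combination or is routed through a migration step first.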

State migration requires an explicit version path rather than implicit field assumptions:

```python
def migrate_state(state: dict) -> dict:
    # Work on a copy so the caller's stored state is not mutated mid-migration.
    state = dict(state)
    version = state.get("version", 1)

    if version == 1:
        # Example: in V2, a string field becomes a structured policy list.
        state["policies"] = [{"name": state.pop("policy", "default"), "mode": "enforced"}]
        state["version"] = 2
        version = 2

    if version == 2:
        # Example: in V3, the target definition is stored in objective instead of goal.
        state["objective"] = state.pop("goal", None)
        state["version"] = 3
        version = 3

    return state


old_state = {"version": 1, "goal": "Analyze login security", "policy": "safe-mode"}
new_state = migrate_state(old_state)
print(new_state)
```

Summary and Outlook

Building durable AI agents is not purely a question of model strength, but a systems engineering challenge. The ability to serialize state precisely over days, filter context intelligently, and suppress cognitive drift through formal methods forms the foundation for the next generation of productive AI. Durable agents are transforming the way we work by taking over tasks that previously required human endurance. Whether in cybersecurity, where systems such as PentestGPT v2 or Shannon track vulnerabilities across complex attack paths, or in research, where InfiAgent correlates hundreds of documents, the key lies in persistence. In practice, this means:

- Rely on durable execution (for example, Temporal) to make your workflows crash-resilient.

- Use the virtual actor model to combine scalability with resource efficiency.

- Implement hierarchical supervision structures and formal verification to improve reliability and catch faulty reasoning early.

- Prepare your systems for the agentic mesh by supporting open standards such as MCP and A2A.

The journey from simple ReAct loops to autonomous, durable protocols has only just begun. The architectures described here are the tools with which we will shape that future.

References

[1] Anthropic, "Effective harnesses for long-running agents," Nov. 26, 2025. [Online]. Available: https://www.anthropic.com/engineering/effective-harnesses-for-long-running-agents

[2] Emergent Mind, "PentestGPT: Automated LLM Pen Testing." [Online]. Available: https://www.emergentmind.com/topics/pentestgpt

[3] LangChain, "Durable execution." [Online]. Available: https://docs.langchain.com/oss/python/langgraph/durable-execution

[4] Temporal, "From prototype to production-ready agentic AI solution: A use case from Grid Dynamics." [Online]. Available: https://temporal.io/blog/prototype-to-prod-ready-agentic-ai-grid-dynamics

[5] Dapr, "Why Dapr Agents." [Online]. Available: https://docs.dapr.io/developing-ai/dapr-agents/dapr-agents-why/

[6] S. R., "Building Stateful AI Agents at Scale with Microsoft Orleans," DEV Community, 2025. [Online]. Available: https://dev.to/sreeni5018/building-stateful-ai-agents-at-scale-with-microsoft-orleans-4n14

[7] Alias Robotics, "aliasrobotics/cai: Cybersecurity AI (CAI), the framework for AI Security," GitHub. [Online]. Available: https://github.com/aliasrobotics/cai

[8] C. Yu, Y. Wang, S. Wang, H. Yang, and M. Li, "InfiAgent: An Infinite-Horizon Framework for General-Purpose Autonomous Agents," arXiv, 2026. [Online]. Available: https://arxiv.org/html/2601.03204

[9] Anthropic, "Effective context engineering for AI agents," Sep. 29, 2025. [Online]. Available: https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents

[10] A. Payong and S. Mukherjee, "A2A vs MCP - How These AI Agent Protocols Actually Differ," DigitalOcean, Mar. 6, 2026. [Online]. Available: https://www.digitalocean.com/community/tutorials/a2a-vs-mcp-ai-agent-protocols

[11] G. Deng et al., "What Makes a Good LLM Agent for Real-world Penetration Testing?" arXiv, 2026. [Online]. Available: https://arxiv.org/html/2602.17622v1

[12] NeurIPS, "Efficiently Scaling LLM Reasoning Programs with Certaindex," 2025. [Online]. Available: https://neurips.cc/virtual/2025/poster/116107

[13] SanMuzZzZz, "LuaN1aoAgent," GitHub. [Online]. Available: https://github.com/SanMuzZzZz/LuaN1aoAgent

[14] J. Zhou, Y. Sheng, H. Lou, Y. Yang, and J. Fu, "FormalJudge: A Neuro-Symbolic Paradigm for Agentic Oversight," arXiv, 2026. [Online]. Available: https://arxiv.org/html/2602.11136v1

[15] K. Traxler, "You are the Blackboard - AI Agent Assisted Bug Hunting," Vectra AI, Dec. 10, 2025. [Online]. Available: https://www.vectra.ai/blog/ai-agent-assisted-bug-hunting

[16] W. Talukdar, "AI Agentic Mesh - A Foundational Architecture for Enterprise Autonomy," IEEE Computer Society, Nov. 3, 2025. [Online]. Available: https://www.computer.org/publications/tech-news/trends/ai-agentic-mesh

[17] Strands Agents, "Agent2Agent (A2A)." [Online]. Available: https://strandsagents.com/latest/documentation/docs/user-guide/concepts/multi-agent/agent-to-agent/
